Automated Content Drift Detection System

The Problem

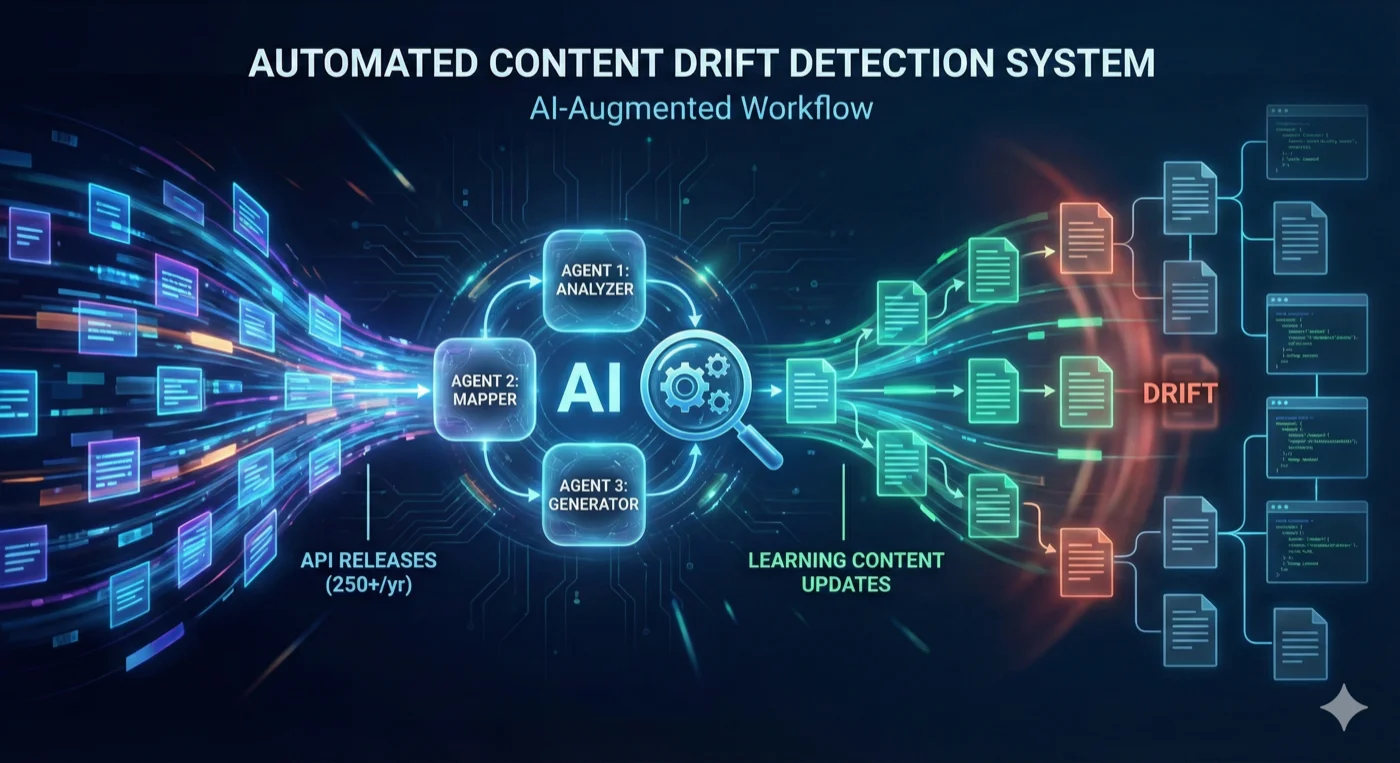

At commercetools, 50+ hours of educational content serves thousands of developers and powers our RAG-based AI assistant. With 250+ API releases annually (5+ per week), keeping this content synchronized was unsustainable.

The crisis: By January 2026, 1,200+ release notes published since January 2024 had accumulated—a 2-year backlog requiring manual cross-referencing against all learning modules. Content drift remained invisible until developers reported incorrect information or outdated patterns—directly impacting AI assistant accuracy and developer trust.

The bottleneck: Content teams lacked capacity for systematic review. Even with dedicated resources, cross-referencing 250+ annual releases against 50+ hours of learning content required comprehensive knowledge of every module and API domain. The backlog accumulated with no process to address it.

The Solution

In January 2026, I designed a three-agent workflow using GitHub Copilot Skills that integrates directly into the content team’s existing VS Code/GitHub workflow—no new tools, no context switching.

Architecture Rationale

- Native integration — Leverages existing developer tools and workflows

- Human-in-the-loop — AI augments decision-making rather than replacing it

- Existing infrastructure — Uses GitHub Copilot’s LLM capabilities without custom NLP pipelines

- Modular stages — Discrete workflow steps enable iterative refinement and error recovery

The Three-Agent Workflow

flowchart TD

subgraph START["📋 START"]

A["📄 RELEASE NOTE<br/>250+ published annually<br/>5+ per week"]

end

subgraph AGENT1["🔍 AGENT 1: RELEASE-ANALYZER"]

B["📝 Analysis<br/><br/><b>Input:</b> Release note MDX<br/><b>Output:</b> analysis.json<br/><br/>• Extract API changes<br/>• Identify affected modules<br/>• Score severity<br/>• Estimate effort<br/><br/>📊 1 release → 0-4 modules"]

C1{{"👤 Human Checkpoint<br/>Review affected modules"}}

end

subgraph AGENT2["🗺️ AGENT 2: CONTENT-MAPPER"]

D["🎯 Mapping<br/><br/><b>Input:</b> analysis.json + content<br/><b>Output:</b> recommendations.json<br/><br/>• Locate sections to update<br/>• Provide file paths + lines<br/>• Generate change descriptions<br/>• Prioritize by impact<br/><br/>📊 4 modules → 8-15 file edits"]

C2{{"👤 Human Checkpoint<br/>Review recommendations"}}

end

subgraph AGENT3["✨ AGENT 3: CHANGE-GENERATOR"]

E["✍️ Generation<br/><br/><b>Input:</b> recommendations.json<br/><b>Output:</b> content-updates.md<br/><br/>• Generate MDX content<br/>• Maintain voice/style<br/>• Preserve structure<br/>• Output git-ready diffs<br/><br/>📊 15 edits → Ready-to-merge"]

C3{{"👤 Human Checkpoint<br/>Approve changes"}}

end

subgraph COMPLETE["✅ COMPLETE"]

F["🚀 Merge to main"]

end

%% Main flow

A --> B

B --> C1

C1 --> D

D --> C2

C2 --> E

E --> C3

C3 --> F

%% Dark theme styling matching site aesthetic

classDef startStyle fill:#2d1b0e,stroke:#f59e0b,stroke-width:3px,color:#f9fafb,font-weight:bold;

classDef agent1Style fill:#1a2234,stroke:#fbbf24,stroke-width:3px,color:#f9fafb,font-weight:bold;

classDef agent2Style fill:#1e1b4b,stroke:#a78bfa,stroke-width:3px,color:#f9fafb,font-weight:bold;

classDef agent3Style fill:#0f1419,stroke:#fb923c,stroke-width:3px,color:#f9fafb,font-weight:bold;

classDef checkpointStyle fill:#1f2937,stroke:#f59e0b,stroke-width:2px,color:#fbbf24,font-weight:bold;

classDef completeStyle fill:#064e3b,stroke:#10b981,stroke-width:4px,color:#f9fafb,font-weight:bold;

class A startStyle

class B agent1Style

class D agent2Style

class E agent3Style

class C1,C2,C3 checkpointStyle

class F completeStyle

%% Link styling

linkStyle 0,1 stroke:#fbbf24,stroke-width:3px;

linkStyle 2,3 stroke:#a78bfa,stroke-width:3px;

linkStyle 4,5 stroke:#fb923c,stroke-width:3px;

linkStyle 6 stroke:#10b981,stroke-width:3px;

How Agent 1 Detects & Prioritizes Impact

Agent 1 (Release Analyzer) uses severity-based scoring and topic matching to identify affected modules:

- Breaking changes receive 2× priority (deprecated APIs, removed features, behavior changes)

- New features receive 2× priority (GA announcements, new endpoints)

- Enhancements and fixes receive standard priority

Release notes carry topic tags that are matched against each learning module's topics. Candidates are ranked by score within each learning path, and only the top 20th percentile is surfaced for review, which keeps the signal-to-noise ratio high.
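The scoring and ranking described above can be sketched roughly as follows. This is a minimal illustration, not the production logic: the field names (`change_type`, `topics`), the overlap-count score, and the reading of "top 20th percentile" as "highest-scoring 20% of candidates" are all assumptions made for the example.

```python
from dataclasses import dataclass

# Assumed multipliers mirroring the priority list above; real values live in config.
SEVERITY_MULTIPLIER = {"breaking": 2.0, "feature": 2.0, "enhancement": 1.0, "fix": 1.0}


@dataclass(frozen=True)
class Candidate:
    release_id: str
    module: str
    score: float


def score_release(release: dict, module_topics: dict) -> list:
    """Score every module that shares at least one topic tag with the release."""
    multiplier = SEVERITY_MULTIPLIER.get(release["change_type"], 1.0)
    tags = set(release["topics"])
    candidates = []
    for module, topics in module_topics.items():
        overlap = len(tags & set(topics))
        if overlap:
            # Hypothetical score: number of shared topics, weighted by severity.
            candidates.append(Candidate(release["id"], module, overlap * multiplier))
    return candidates


def top_fraction(candidates: list, fraction: float = 0.2) -> list:
    """Keep the highest-scoring fraction of candidates (at least one if any exist)."""
    if not candidates:
        return []
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    keep = max(1, round(len(ranked) * fraction))
    return ranked[:keep]
```

In this sketch, `top_fraction` would be called once per learning path so that each path's candidates are ranked only against each other.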

The Outcome

Impact

| Metric | Value |

|---|---|

| Backlog processed | 1,200+ releases (2024-2026) |

| Pilot scope | 2 learning paths validated |

| Production rollout | All learning paths (2026) |

Transformation

From impossible to systematic: Before this system, content review didn’t happen—teams lacked capacity to cross-reference releases against learning content. Now, context-rich reports with severity scores and effort estimates make systematic review feasible.

From reactive to proactive: Content updates shifted from crisis-driven (“users reported wrong info”) to release-driven (“here’s what changed and where it impacts modules”). Sprint planning replaced ad-hoc firefighting.

From invisible drift to visible impact: Content drift that remained hidden until developers reported issues now surfaces immediately, with file paths, line numbers, and recommended changes ready for review.

Technical Design Decisions

1. Topic Matching Over Vector Embeddings

Chose: Static JSON topic map with severity weighting

Rejected: Vector similarity search using embeddings

Rationale: For human-in-the-loop systems, explainability enables iteration. Topic maps execute in <2 seconds at zero cost, are human-editable, and let stakeholders understand and refine the matching logic. Embeddings offered potentially higher precision (80-85% vs. 60-70%), but they required 2-3 hours of execution time and remained a black box that was difficult to debug or improve collaboratively.

Key insight: When validation is mandatory, optimizing for explainability and iteration speed outweighs marginal precision gains.
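To illustrate why a static topic map is easy to inspect, here is a small sketch of one possible shape for it. The module names, topic strings, and the `explain_matches` helper are hypothetical; the point is only that every match can be traced back to specific, human-editable entries.

```python
import json

# Hypothetical topic map: learning module -> topics it covers.
TOPIC_MAP_JSON = """
{
  "carts-fundamentals": ["carts", "discounts", "line-items"],
  "connect-basics": ["connect", "deployments"]
}
"""


def explain_matches(release_tags: list, topic_map: dict) -> dict:
    """For each matched module, return the exact topics that caused the match."""
    tags = {tag.lower() for tag in release_tags}
    matches = {}
    for module, topics in topic_map.items():
        shared = sorted(tags & {topic.lower() for topic in topics})
        if shared:
            matches[module] = shared
    return matches


topic_map = json.loads(TOPIC_MAP_JSON)
result = explain_matches(["Carts", "Discounts"], topic_map)
print(result)
```

A reviewer who disagrees with a match can see which topic triggered it and edit the JSON directly, which is the kind of iteration an embedding score would not support.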

2. Filesystem as State Management

Each workflow stage produces version-controlled JSON/Markdown outputs:

- Resume from any checkpoint

- Audit trail for all recommendations

- Team collaboration via Git

- No database infrastructure
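A rough sketch of how resuming from a checkpoint could work when the filesystem is the only state. The output file names come from the workflow diagram; the one-directory-per-run layout and the `next_stage` helper are assumptions for illustration.

```python
from pathlib import Path
from typing import Optional

# Stage outputs in pipeline order; file names follow the workflow diagram.
STAGE_OUTPUTS = [
    ("release-analyzer", "analysis.json"),
    ("content-mapper", "recommendations.json"),
    ("change-generator", "content-updates.md"),
]


def next_stage(run_dir: Path) -> Optional[str]:
    """Return the first stage whose output file is missing, or None when all exist."""
    for stage, filename in STAGE_OUTPUTS:
        if not (run_dir / filename).exists():
            return stage
    return None
```

Because each output is a plain file under version control, deleting or reverting one file is enough to rerun that stage and everything after it.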

3. Leveraging Copilot Skills

Rather than building custom LLM automation, the system leverages GitHub Copilot Skills’ production-quality NLP capabilities (semantic understanding, MDX generation, voice consistency) without managing prompts, rate limits, or model versions.

Validation Strategy

Pilot-first deployment: Built for 2 representative learning paths—one API-focused (Composable Commerce) and one integration-focused (Connect)—to test different content types and change patterns. Ran analysis on 3 months of releases (60+ notes) to validate detection logic and measure recommendation quality.

Validation results: The pilot surfaced 40+ legitimate content updates that had been missed during manual review, confirming the system detected real drift. The team reviewed all recommendations and refined topic mappings based on false matches, improving precision from an initial 55% to 65% before production rollout.

Incremental expansion: Modular design supported path-by-path rollout without technical debt. After pilot validation, expanded to all 8 learning paths in production.

Tunable thresholds: Key parameters (percentile cutoffs, date ranges, severity multipliers) are first-class configuration, so the team can iterate without code changes and re-run the analysis in <2 seconds.
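One way such configuration might be modeled is sketched below. The key names and the `DriftConfig` class are hypothetical; only the three parameter kinds (percentile cutoff, date range, severity multipliers) come from the description above.

```python
import json
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DriftConfig:
    percentile_cutoff: float      # fraction of top-ranked candidates to surface
    start_date: date              # first release date to include
    end_date: date                # last release date to include
    severity_multipliers: dict    # change type -> score multiplier

    @classmethod
    def from_json(cls, raw: str) -> "DriftConfig":
        """Parse and validate a JSON config string (hypothetical key names)."""
        data = json.loads(raw)
        cutoff = float(data["percentile_cutoff"])
        if not 0 < cutoff <= 1:
            raise ValueError("percentile_cutoff must be in (0, 1]")
        return cls(
            percentile_cutoff=cutoff,
            start_date=date.fromisoformat(data["start_date"]),
            end_date=date.fromisoformat(data["end_date"]),
            severity_multipliers=dict(data["severity_multipliers"]),
        )
```

Validating values at load time means a mistyped cutoff fails immediately instead of silently changing which candidates are surfaced.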

Key Insights

1. Augment, Don’t Replace: Identify irreducible human decision points and design around them. The system reduces decision volume by 90%+ rather than eliminating judgment where context matters.

2. Separate Detection from Updating: These require different optimization targets. Detection needs speed and scale (high recall, acceptable false positives). Updating needs accuracy and nuance (zero tolerance for errors). One system can’t optimize for both.

3. Explainability Enables Iteration: In production systems with diverse stakeholders, choose approaches they can inspect and refine. Topic maps (60-70% precision) were chosen over embeddings (80-85% precision) because stakeholders could collaboratively improve matching logic without technical expertise.

4. Audit Infrastructure First: Discover existing capabilities and constraints before building. Leveraging GitHub Copilot Skills instead of building custom LLM pipelines saved ~2 weeks.

Future Evolution

Next priorities:

- CI/CD Integration — Trigger analysis automatically on release note commits with GitHub Actions, posting results as PR comments and Slack notifications

- Interactive Dashboard — Visualize module “freshness” metrics, drift accumulation, and workflow progress

- Semantic Topic Expansion — Use embeddings to discover related terms and auto-suggest topic map additions

- Historical Validation — Build labeled dataset for empirical precision/recall measurement and algorithm refinement

Production system maintaining learning content accuracy for thousands of developers at 5+ API releases per week.