The challenge

The grading workload challenge

University educators face an unsustainable grading workload. A single assignment requiring detailed feedback takes 30-45 minutes per student. For a cohort of 90 students, this translates to 45-70 hours of manual marking—often completed over weekends and breaks.

This creates three critical problems:

- Delayed feedback: Students receive grades weeks after submission, when learning value is diminished

- Inconsistent standards: Fatigue leads to grading drift, where papers marked on day one receive different treatment than papers marked on day five

- Faculty burnout: Repetitive assessment tasks consume time that should be spent on teaching and research

Why this matters for learning

In education programs, detailed feedback is pedagogically essential. Students submit reflective portfolios analyzing their teaching practices through theoretical frameworks. They need specific, criterion-based guidance to develop professional judgment, not just a grade and a brief comment.

Traditional solutions fail:

- Teaching assistants cost $50-60/hour and still face consistency challenges

- Peer review lacks the theoretical depth students need

- Auto-graders work for multiple-choice tests, not complex reflective writing

The design challenge

Create a system that maintains privacy, ensures grading consistency, preserves the nuance of rubric-based assessment, and generates feedback detailed enough to support student learning, all while processing 90+ papers in hours instead of days.

The process

Research & analysis

I interviewed faculty members across three education courses and discovered consistent pain points:

Grading inconsistency stems from fatigue, not incompetence. Experienced educators grade the same paper differently on Monday versus Friday. The problem isn’t lack of standards—it’s cognitive load during marathon grading sessions.

Students submit mixed document formats. Some courses require PDFs, others accept Word documents. Many papers contain complex tables showing analytical frameworks. Standard text extraction loses this structure, making papers incomprehensible.

Privacy concerns block AI adoption. Faculty expressed strong ethical concerns about sending student work to third-party APIs, even when tools showed promise. This wasn’t technophobia—it was legitimate concern about privacy compliance and student consent.

Design approach

I applied three core learning design principles:

1. Consistency through calibration, not automation

Human graders undergo “moderation” training where they review exemplar papers at each grade band to calibrate their judgment. I replicated this for AI.

Instead of just providing rubrics (which define what to assess), I created detailed moderation notes describing how to interpret borderline cases. These notes include:

- Exemplar descriptions from actual papers at each grade level

- Common student mistakes to recognize

- Field-specific guidance (e.g., early childhood education vs. secondary education contexts)

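This 6,500-character moderation context calibrates the AI in much the same way moderation training calibrates human markers. It is supplied as part of the grading prompt; the model itself is not retrained.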

2. Privacy-preserving architecture

I built a two-phase anonymization system:

- Phase 1: Regex patterns detect and replace student IDs, emails, and names with anonymous identifiers (Student_001, Student_002)

- Phase 2: Secure JSON mapping file (excluded from version control) enables post-grading re-identification

Students’ work never reaches the API with identifiable information. The AI grades “Student_023’s reflective portfolio,” not “Sarah Johnson’s assignment.”
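The two phases above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the regex patterns, the `[EMAIL]` and `[STUDENT_ID]` placeholders, and the `known_names` argument (assumed to come from a class roster) are all assumptions, and real ID and email formats vary by institution.

```python
from __future__ import annotations

import json
import re
from pathlib import Path

# Hypothetical patterns; adjust to the institution's real ID and email formats.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
STUDENT_ID_RE = re.compile(r"\b\d{7,9}\b")


def anonymize(text: str, known_names: list[str], index: int) -> tuple[str, dict]:
    """Phase 1: replace identifiers in one paper and return its mapping entry."""
    alias = f"Student_{index:03d}"
    entry = {
        "emails": sorted(set(EMAIL_RE.findall(text))),
        "student_ids": sorted(set(STUDENT_ID_RE.findall(text))),
        "names": list(known_names),
    }
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = STUDENT_ID_RE.sub("[STUDENT_ID]", text)
    # Replace longer names first so "Sarah Johnson" is handled before "Sarah".
    for name in sorted(known_names, key=len, reverse=True):
        text = re.sub(re.escape(name), alias, text, flags=re.IGNORECASE)
    return text, {alias: entry}


def save_mapping(mapping: dict, path: Path) -> None:
    """Phase 2: persist the alias-to-identity map (keep this file out of version control)."""
    path.write_text(json.dumps(mapping, indent=2), encoding="utf-8")
```

Only the text returned by `anonymize` would be sent for grading; the mapping entry stays on the local machine for later re-identification.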

3. Cognitive load reduction for educators

The system doesn’t replace educator judgment—it handles the mechanical first pass. Faculty receive detailed AI-generated feedback to review, edit, and approve rather than writing from scratch. This shifts cognitive work from “generate 90 sets of detailed feedback” to “spot-check and refine 90 drafts.”

Development & iteration

Solving the document format problem

Early testing revealed that PDF and Word documents required completely different processing approaches. I built dual conversion pipelines:

- PDFs: pdfplumber with table-aware extraction preserves rows/columns as pipe-delimited text

- Word: mammoth converts to Markdown while maintaining table structure

The system auto-detects file type and routes to the appropriate converter.
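A rough sketch of that routing is shown below. It assumes file type is detected from the extension, which the case study does not specify. The function names and the `CONVERTERS` table are hypothetical; the `pdfplumber` and `mammoth` calls are those libraries' public APIs, imported lazily so the router itself has no hard dependency on either.

```python
from __future__ import annotations

from pathlib import Path


def rows_to_pipe_text(rows: list[list[str | None]]) -> str:
    """Render table rows as pipe-delimited lines; empty cells become ''."""
    lines = []
    for row in rows:
        cells = [(cell or "").replace("\n", " ").strip() for cell in row]
        lines.append("| " + " | ".join(cells) + " |")
    return "\n".join(lines)


def convert_pdf(path: Path) -> str:
    import pdfplumber  # third-party, imported only when a PDF is processed

    parts = []
    with pdfplumber.open(path) as pdf:
        for page in pdf.pages:
            parts.append(page.extract_text() or "")
            # Simplification: table cells may also appear in the page text above.
            for table in page.extract_tables():
                parts.append(rows_to_pipe_text(table))
    return "\n\n".join(parts)


def convert_docx(path: Path) -> str:
    import mammoth  # third-party, imported only when a DOCX is processed

    with open(path, "rb") as docx_file:
        return mammoth.convert_to_markdown(docx_file).value


CONVERTERS = {".pdf": convert_pdf, ".docx": convert_docx}


def convert(path: str | Path) -> str:
    """Route a submission to the converter that matches its extension."""
    path = Path(path)
    try:
        converter = CONVERTERS[path.suffix.lower()]
    except KeyError:
        raise ValueError(f"Unsupported file type: {path.suffix!r}") from None
    return converter(path)
```

Failing loudly on unknown extensions, rather than guessing, keeps an unsupported submission from silently producing empty text for grading.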

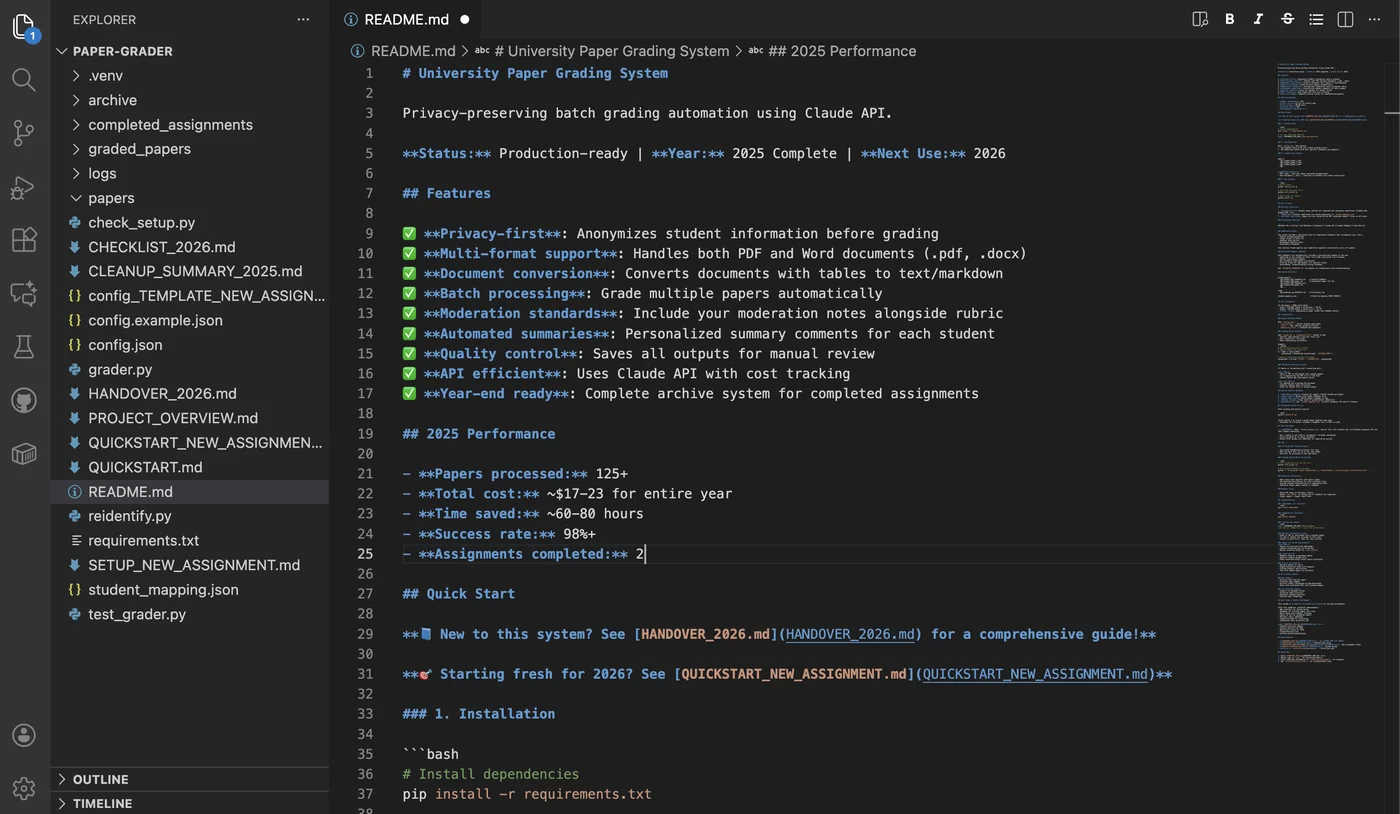

Teaching the AI to grade like an expert

Initial versions produced accurate but generic feedback. The breakthrough came when I treated prompt engineering as instructional design.

I structured the grading prompt in three parts:

- Assignment context: What students were asked to do and why

- Rubric: Criteria and point values (what to assess)

- Moderation notes: Calibration examples (how to interpret quality)

This mimics how universities train new markers—explain the assignment goals, provide assessment criteria, then calibrate judgment through exemplars.
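As an illustration of that three-part structure, a prompt builder might look something like the sketch below. The section titles, the rubric field names (`criterion`, `points`), and the function names are assumptions made for the example, not the wording used in the actual system.

```python
from __future__ import annotations


def format_rubric(rubric: list[dict]) -> str:
    """Render rubric criteria as one bullet per criterion with its point value."""
    return "\n".join(
        f"- {item['criterion']} ({item['points']} points)" for item in rubric
    )


def build_grading_prompt(
    assignment_context: str,
    rubric: list[dict],
    moderation_notes: str,
    paper_text: str,
    alias: str,
) -> str:
    """Assemble the prompt: context, then rubric, then calibration, then the paper."""
    sections = [
        ("ASSIGNMENT CONTEXT", assignment_context.strip()),
        ("RUBRIC", format_rubric(rubric)),
        ("MODERATION NOTES", moderation_notes.strip()),
        (f"PAPER ({alias})", paper_text.strip()),
    ]
    return "\n\n".join(f"## {title}\n{body}" for title, body in sections)
```

Placing the anonymized paper last means the model reads what to assess and how to interpret quality before it sees any student work, mirroring the order in which a new marker would be briefed.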

Building quality control workflows

I designed the system to save both graded feedback AND anonymized papers. This enables faculty to spot-check AI decisions against original student work—building trust through transparency rather than asking for blind acceptance.

The solution

System architecture

```mermaid
flowchart TD
    subgraph INPUT["📥 INPUT"]
        PAPERS["📄 Student Papers<br/>PDF & DOCX"]
        CONFIG["⚙️ Configuration<br/>Rubric & Moderation"]
    end

    subgraph PROCESS["⚙️ PROCESSING"]
        CONVERT["📑 Convert<br/>Extract Text & Tables"]
        ANON["🔒 Anonymize<br/>Remove Identifiers"]
        GRADE["🤖 Grade with AI<br/>Claude Sonnet 4.5"]
        SAVE["💾 Save Results<br/>Feedback & Logs"]
    end

    subgraph OUTPUT["📤 OUTPUT"]
        FEEDBACK["📝 Graded Papers<br/>Detailed Feedback"]
        MAPPING["🔐 Student Mapping<br/>Secure Identity Map"]
    end

    subgraph REIDENT["🔄 RE-IDENTIFICATION"]
        TOOLS["🛠️ Lookup Tools<br/>Export Grades"]
    end

    %% Main flow
    INPUT --> CONVERT
    CONVERT --> ANON
    ANON --> GRADE
    GRADE --> SAVE
    SAVE --> FEEDBACK
    SAVE --> MAPPING
    FEEDBACK --> TOOLS
    MAPPING --> TOOLS

    %% Dark theme styling
    classDef inputStyle fill:#2d1b0e,stroke:#f59e0b,stroke-width:3px,color:#f9fafb,font-weight:bold;
    classDef processStyle fill:#1a2234,stroke:#fbbf24,stroke-width:3px,color:#f9fafb,font-weight:bold;
    classDef outputStyle fill:#1e1b4b,stroke:#a78bfa,stroke-width:3px,color:#f9fafb,font-weight:bold;
    classDef reidentStyle fill:#0f1419,stroke:#fb923c,stroke-width:3px,color:#f9fafb,font-weight:bold;

    class PAPERS,CONFIG inputStyle
    class CONVERT,ANON,GRADE,SAVE processStyle
    class FEEDBACK,MAPPING outputStyle
    class TOOLS reidentStyle

    %% Link styling
    linkStyle 0,1,2,3 stroke:#fbbf24,stroke-width:3px;
    linkStyle 4,5 stroke:#a78bfa,stroke-width:3px;
    linkStyle 6,7 stroke:#fb923c,stroke-width:3px;
```

What educators experience

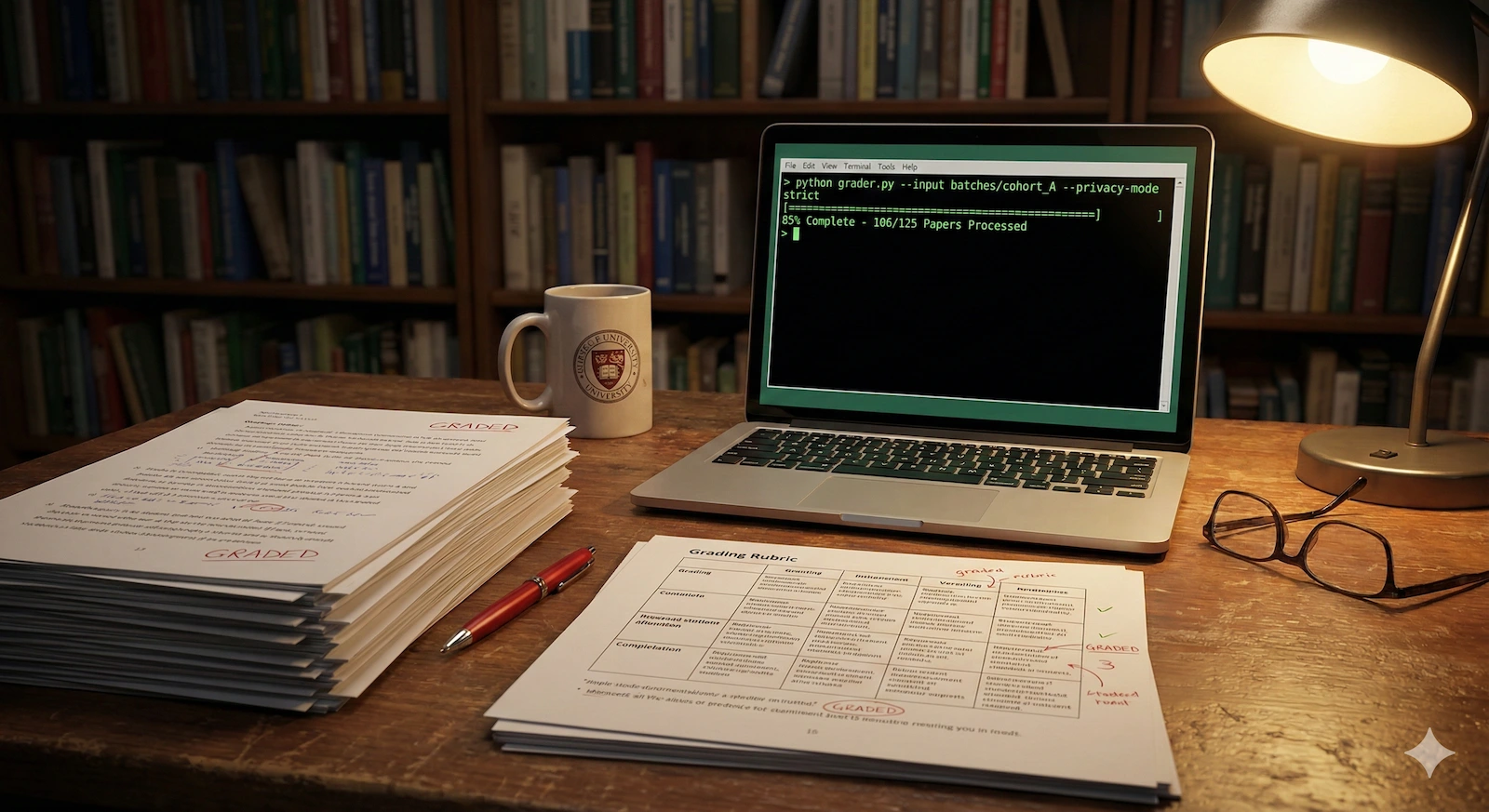

Faculty configure one JSON file with their rubric, moderation notes, and assignment description. They run a quick setup check to validate dependencies and API credentials, then test on a single paper to verify output quality.
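To make the single-file configuration concrete, here is a hypothetical example of what such a file and a basic validity check could look like. The key names, rubric entries, and point values are invented for illustration; the actual schema is not documented in this case study.

```python
from __future__ import annotations

import json

# Hypothetical schema: these key names are assumptions, not the system's real ones.
REQUIRED_KEYS = ("assignment_description", "rubric", "moderation_notes")

EXAMPLE_CONFIG = """
{
  "assignment_description": "Reflective portfolio analysing teaching practice through a theoretical framework.",
  "rubric": [
    {"criterion": "Theoretical analysis", "points": 40},
    {"criterion": "Reflection on practice", "points": 40},
    {"criterion": "Academic writing", "points": 20}
  ],
  "moderation_notes": "HD papers critique the framework rather than only applying it."
}
"""


def load_config(raw: str) -> dict:
    """Parse the JSON config and fail early if something the grader needs is missing."""
    config = json.loads(raw)
    missing = [key for key in REQUIRED_KEYS if key not in config]
    if missing:
        raise ValueError(f"Config is missing required keys: {', '.join(missing)}")
    if not config["rubric"]:
        raise ValueError("Rubric must list at least one criterion")
    for item in config["rubric"]:
        if "criterion" not in item or item.get("points", 0) <= 0:
            raise ValueError(f"Invalid rubric entry: {item!r}")
    return config
```

A check like this belongs in the setup step, so that a malformed rubric is caught before any paper is sent for grading.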

Satisfied with the test, they place 90 student papers (mixed PDF and Word formats) into a folder and run the grading command. The system shows real-time progress: “Processing student_paper_1.pdf… Extracting text… Anonymizing… Grading as Student_001… SUCCESS.”

Within 60-90 minutes, they have 90 detailed feedback files to review. Each contains:

- Criterion-by-criterion analysis (strengths, development areas, specific suggestions)

- Grade justifications aligned to university standards (HD/D/C/P)

- Personalized summary addressed to the student by first name

Faculty check the feedback against the papers, make any needed edits, then export grades to their university gradebook.
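The re-identification and export step could be sketched as a simple join between the grades (keyed by anonymous identifier) and the secure mapping. The mapping layout and CSV columns below are assumptions chosen for the example; a real gradebook import would need the columns that the university's system expects.

```python
from __future__ import annotations

import csv
import io


def export_grades(grades: dict[str, str], mapping: dict[str, dict]) -> str:
    """Join alias -> grade with alias -> identity and return gradebook CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["student_id", "name", "grade"])
    for alias in sorted(grades):
        if alias not in mapping:
            # Refuse to export a grade that cannot be tied back to a student.
            raise KeyError(f"No identity mapping found for {alias}")
        identity = mapping[alias]
        writer.writerow([identity["student_id"], identity["name"], grades[alias]])
    return buffer.getvalue()
```

Because the join happens locally after grading, identities are only reattached once the feedback has been reviewed.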

Results & impact

Measurable outcomes

- 125+ papers graded across two assignment cohorts

- 60-80 hours of faculty time saved per grading cycle

- 98%+ technical success rate with document conversion and grading pipeline

- $17-23 total cost for entire academic year (~$0.14-0.18 per paper)

Quality validation

The moderation notes system enabled the AI to:

- Correctly identify and penalize critical conceptual errors (e.g., incorrect definition of “lazy multiculturalism”)

- Recognize exceptional work with sophisticated critique and specific scholarly citations

- Maintain consistency comparable to experienced human markers

Quality assurance verification: A separate LLM-based QA prompt cross-checked generated feedback against original papers, providing reliability scores for each assessment. This independent validation layer detected edge cases where feedback didn’t accurately reflect paper content, enabling targeted review before final distribution.
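One way to turn those reliability scores into a targeted review list is sketched below. It assumes scores on a 0 to 1 scale and an arbitrary cut-off of 0.8; neither the scale nor the threshold is stated in the case study, and `select_for_review` is a hypothetical helper.

```python
from __future__ import annotations


def select_for_review(reliability: dict[str, float], threshold: float = 0.8) -> list[str]:
    """Return aliases whose QA score falls below the threshold, least reliable first."""
    flagged = [alias for alias, score in reliability.items() if score < threshold]
    return sorted(flagged, key=lambda alias: reliability[alias])
```

Sorting the least reliable assessments first lets a reviewer with limited time start where the feedback is most likely to misrepresent the paper.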

Faculty reviewer feedback: “Caught the exact error I specifically flagged in moderation notes. Feedback more detailed than I would have written manually.”

Student impact

Several students noted in course evaluations that feedback was “more specific than previous assignments” and “helped me understand exactly what the rubric meant.” This suggests AI assessment’s value extends beyond efficiency to enabling pedagogically desirable feedback detail that’s practically impossible at scale with human-only grading.

Key takeaways

Privacy-preserving design builds necessary trust. Faculty initially expressed concerns about “sending student work to AI companies.” The anonymization pipeline, combined with transparent documentation of data handling, transformed those concerns into confidence. EdTech AI adoption hinges not just on capability but on visible, auditable privacy safeguards.

AI amplifies pedagogical expertise rather than replacing it. The system doesn’t automate judgment; it scales the instructor’s ability to apply assessment criteria consistently across cohorts too large for sustained human attention. The moderation notes component essentially externalizes expert mental models, making tacit knowledge explicit.

Document format diversity is a real constraint. I initially planned for PDF-only processing, but student submission realities demanded Word support. Accommodating existing practices rather than dictating compliance created a more usable tool.

Quality control must be designed into the workflow. Saving anonymized papers alongside feedback enables spot-checking. Timestamp logging enables performance tracking. Clear file naming enables rapid manual review. These weren’t add-ons—they were core to building faculty trust.

Prompt engineering is instructional design. Writing the grading prompt meant defining learning objectives (what quality work looks like), pedagogy (how to provide constructive feedback), and assessment criteria (how to interpret rubric boundaries). Creating effective AI assessment tools requires instructional design expertise, not just technical skills.

Future enhancements

Build the web interface first. Faculty adoption would be higher with drag-and-drop upload than with a command line. A web interface would also enable collaborative review workflows, with multiple markers spot-checking different papers.

Involve students in process design earlier. I built the system entirely from the faculty perspective. Student input on feedback preferences would have been valuable (e.g., do they prefer strengths and weaknesses separated? Do they want specific page references?).

Establish formal success metrics upfront. I informally tracked “Does the grade match what I would give?”, but formal inter-rater reliability testing (AI grades vs. human grades on a calibration set) would provide quantitative validation.
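As one possible form of that test, Cohen's kappa measures agreement between two raters after correcting for agreement expected by chance. The sketch below computes unweighted kappa over grade bands. The choice of kappa is an assumption, since the case study does not name a specific reliability statistic; because HD/D/C/P bands are ordered, a weighted variant may suit better in practice.

```python
from __future__ import annotations

from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Unweighted Cohen's kappa for two raters labelling the same papers."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must grade the same non-empty set of papers")
    n = len(rater_a)
    # Proportion of papers where both raters gave the same band.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Agreement expected if each rater assigned bands independently at their own rates.
    expected = sum(counts_a[band] * counts_b[band] for band in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical band throughout
    return (observed - expected) / (1 - expected)
```

For example, with hypothetical human grades `["HD", "D", "C", "P", "D", "C"]` and AI grades `["HD", "D", "C", "P", "C", "C"]`, the raters agree on five of six papers, and kappa works out to 10/13, or about 0.77.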

Change management is as important as technical implementation. The code works brilliantly, but faculty adoption requires trust-building, training, and clear quality control demonstration. If launching at other institutions, I’d spend 40% effort on technical development and 60% on pedagogy-aware rollout strategy.